Hung-Chieh Fang

I am an incoming PhD student at UCLA, co-advised by Prof. Bolei Zhou and Prof. Yuchen Cui. I received my bachelor’s degree in Computer Science from National Taiwan University, where I was fortunate to be advised by Professors Hsuan-Tien Lin, Shao-Hua Sun, and Yun-Nung (Vivian) Chen. I was also a visiting research intern at Stanford University, working with Prof. Dorsa Sadigh, and at The Chinese University of Hong Kong, working with Prof. Irwin King.

hcfang@ucla.edu / Google Scholar / GitHub / X

Research

I am interested in learning structured representations for generalist robotics. My research focuses on leveraging self-supervised learning to extract richer supervisory signals from data for policy learning and world modeling, with the goal of enabling generalization and continual learning.

DexDrummer: In-Hand, Contact-Rich, and Long-Horizon Dexterous Robot Drumming

Hung-Chieh Fang, Amber Xie, Jennifer Grannen, Kenneth Llontop, Dorsa Sadigh

ICRA Workshop on Dexterity with Multifingered Hands, 2026 (Spotlight Presentation)

paper / website / code / poster

Manipulation often requires dexterous in-hand tool use, complex contact interactions, and stability over long horizons. We propose DexDrummer, a hierarchical sim-to-real framework that integrates these capabilities within a unified drumming testbed.

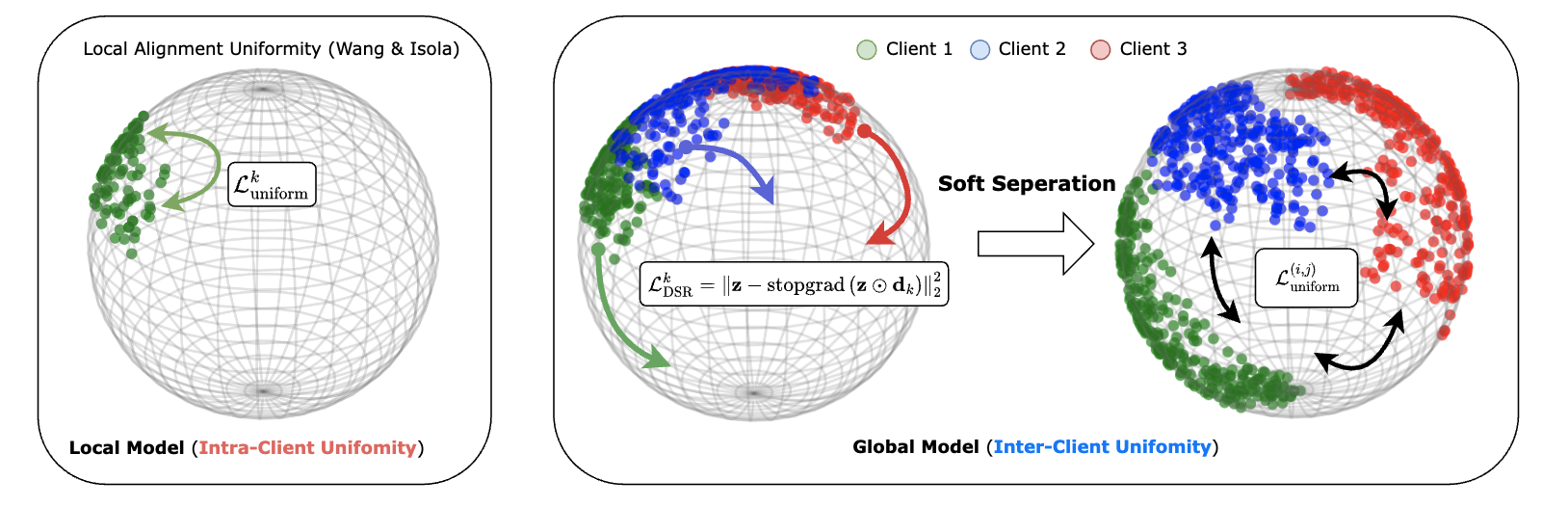

Soft Separation and Distillation: Toward Global Uniformity in Federated Unsupervised Learning

Hung-Chieh Fang, Hsuan-Tien Lin, Irwin King, Yifei Zhang

ICCV 2025

paper / website / code / poster

We explore how to improve generalization under highly non-IID data distributions, where clients cannot share their representations. We propose a plug-and-play regularizer that encourages representations to disperse, improving uniformity without sacrificing semantic alignment.

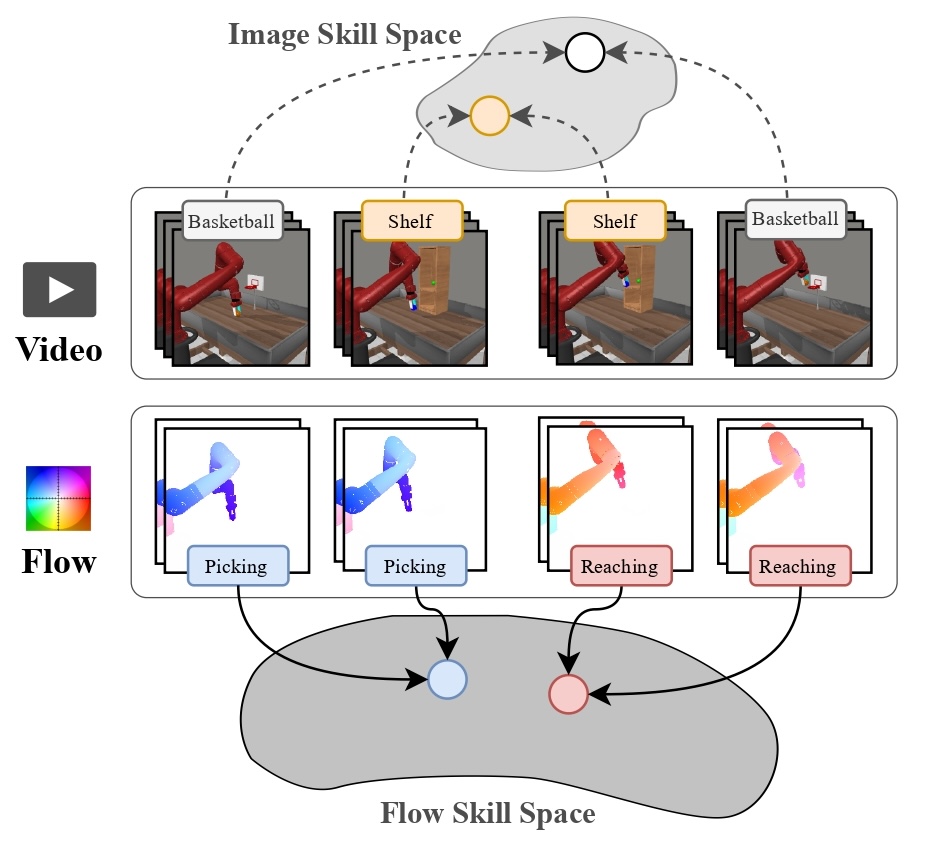

Learning Skills from Action-Free Videos

Hung-Chieh Fang*, Kuo-Han Hung*, Chu-Rong Chen, Po-Jung Chou, Chun-Kai Yang, Po-Chen Ko, Yu-Chiang Wang, Yueh-Hua Wu, Min-Hung Chen, Shao-Hua Sun

ICML Workshop on Building Physically Plausible World Models, 2025

paper / website

We propose SOF, a method that leverages the temporal structure of videos while making learned skills easier to translate into low-level control. SOF learns a latent skill space from optical-flow representations, which better align video and action dynamics and thereby improve long-horizon performance.

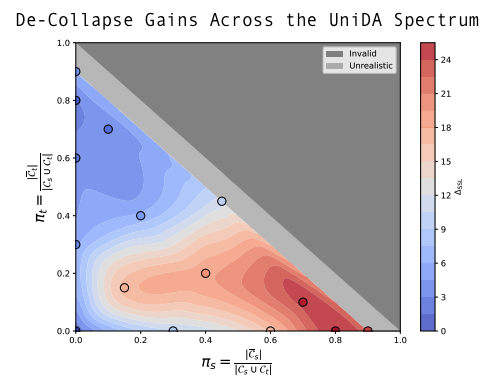

Tackling Dimensional Collapse toward Comprehensive Universal Domain Adaptation

Hung-Chieh Fang, Po-Yi Lu, Hsuan-Tien Lin

ICML 2025

paper / website / poster

We study how to adapt to arbitrary target domains without assuming any class-set priors. Existing methods suffer from severe negative transfer under large class-set shifts due to the overestimation of importance weights. We propose a simple uniformity loss that increases the entropy of target representations and improves performance across all class-set priors.

Awards

National Taiwan University

Principal’s Award for Bachelor’s Thesis, 2024 (Best Thesis in the EECS College)

Dean’s List Award, Fall 2024 (Top 5% of the class)

Teaching and Service

Reviewer

ICLR (2026), TMLR (2025, 2026)

National Taiwan University

Teaching Assistant, EE5100: Introduction to Generative Artificial Intelligence, Spring 2024

Teaching Assistant, CSIE5043: Machine Learning, Spring 2023

This template is adapted from here.